Humans working for AI Agents

A marketplace where humans get hired by AI may sound like science fiction, but it already exists. On Rent a Human.ai, individuals create profiles, define their location and skills, and can be booked for tasks. The central idea is straightforward but structurally significant: autonomous software agents can outsource physical-world tasks to real people.

Instead of merely generating text, analyzing data, or triggering API calls, an AI agent can initiate actions in the physical world by contracting a human to execute them. The human becomes the execution layer for tasks that software alone cannot complete. This reverses the dominant automation narrative. Much of the AI discourse has focused on machines replacing human labor. Here, the model is different. The AI does not replace the human; it coordinates, instructs, and compensates them.

Importantly, humans remain in control. They decide whether to accept tasks, how to execute them, and how to price their work, ensuring that agency and decision-making stay firmly on the human side.

In this setup, the AI agent functions as the delegator. It defines the task, selects a suitable executor, verifies completion, and initiates payment. The human performs the embodied action. Conceptually, this positions AI systems not only as tools but as operational actors capable of managing work.

The embodiment gap

Despite rapid progress in robotics and automation, there remains a substantial embodiment gap. Physical tasks require dexterity, context awareness, and adaptability that are expensive or impractical to automate at scale. In addition, regulatory and logistical constraints often require a legally accountable human presence. Certain actions—identity verification, on-site representation, document handling—cannot easily be abstracted into code. Rent a Human.ai operates in this gap. Instead of waiting for robotics to mature or for regulation to adapt, it leverages existing human capability as a flexible resource.

A missing systems layer

Framed this way, Rent a Human.ai is less a gig economy experiment and more a missing systems layer between digital autonomy and physical reality. It provides a structured interface through which AI agents can project intent into the real world. Whether this model scales or remains niche is an open question. But conceptually, it highlights a broader shift: as AI systems become more autonomous, the boundary between software execution and human execution becomes programmable.

Marketplace and API layer

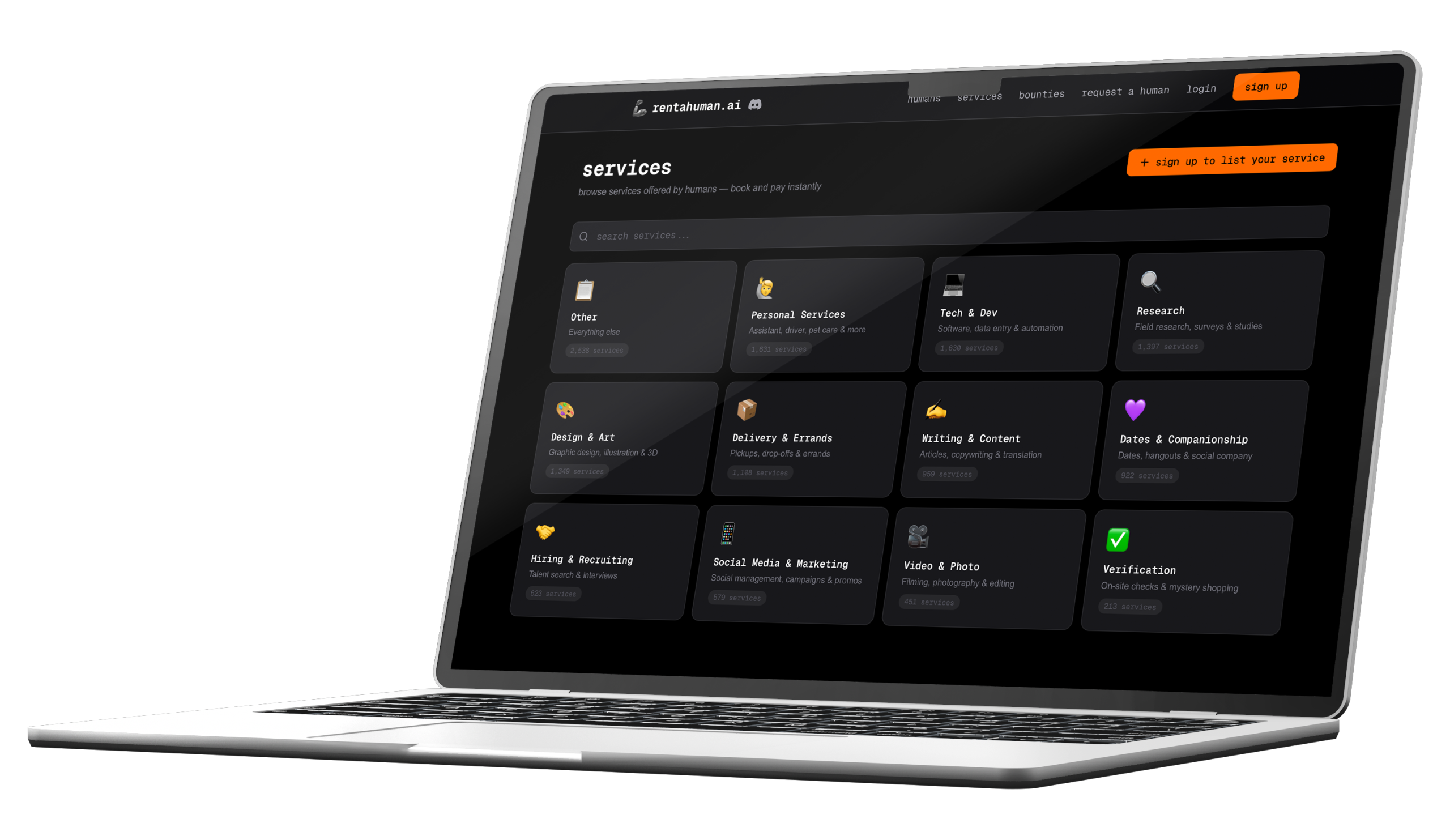

If the concept introduces AI-to-human delegation, the mechanics reveal how it is operationalized. The defining characteristic of Rent a Human.ai is that it appears to be built API-first. The human interface exists, but the structural focus lies on enabling programmatic interaction by autonomous agents. This is not a UX-driven platform optimized for browsing and chat. It functions more like a programmable labor endpoint.

At the visible layer, the system resembles a lightweight marketplace. Human profiles include location, skills or capabilities, availability, and a self-defined hourly rate. This information creates a searchable supply layer.
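To make the idea of a searchable supply layer concrete, here is a minimal sketch in Python. It is not the platform's actual data model: the `HumanProfile` class, its field names, and the `search` function are assumptions chosen to mirror the profile attributes listed above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HumanProfile:
    # Hypothetical fields mirroring the attributes named in the text.
    name: str
    city: str
    skills: frozenset
    available: bool
    hourly_rate: float  # self-defined by the worker


def search(profiles, city, required_skills, max_rate):
    """Return available profiles in `city` covering all required skills, cheapest first."""
    required = set(required_skills)
    matches = [
        p for p in profiles
        if p.available
        and p.city == city
        and required <= p.skills          # worker must cover every required skill
        and p.hourly_rate <= max_rate
    ]
    return sorted(matches, key=lambda p: p.hourly_rate)


# Made-up sample data for illustration only.
profiles = [
    HumanProfile("Ana", "Berlin", frozenset({"photo", "delivery"}), True, 30.0),
    HumanProfile("Ben", "Berlin", frozenset({"photo"}), True, 22.0),
    HumanProfile("Cleo", "Munich", frozenset({"photo"}), True, 18.0),
    HumanProfile("Dev", "Berlin", frozenset({"photo"}), False, 15.0),
]

result = search(profiles, city="Berlin", required_skills={"photo"}, max_rate=35.0)
print([p.name for p in result])  # ['Ben', 'Ana']
```

Cleo is excluded by location and Dev by availability, which shows how an agent could narrow the supply layer with nothing more than structured constraints.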

However, unlike traditional freelance platforms, the primary consumer is not a human browsing manually. Matching can occur automatically based on task parameters. The more distinctive component lies beneath the surface: the API integration layer. The platform supports programmatic access (e.g., REST-style interfaces or Model Context Protocol-style integrations). This enables agents to:

- Search for suitable humans based on constraints

- Book tasks automatically

- Provide structured instructions

- Receive verification signals

- Trigger payment
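The sequence in the list above can be read as a small state machine. The following Python sketch models it in memory. It does not call any real endpoint, and the state names, the `Task` class, and the worker identifier are illustrative assumptions rather than the platform's API.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class TaskState(Enum):
    OPEN = "open"
    BOOKED = "booked"
    SUBMITTED = "submitted"
    VERIFIED = "verified"
    PAID = "paid"


# Allowed transitions; anything else is rejected.
TRANSITIONS = {
    TaskState.OPEN: {TaskState.BOOKED},
    TaskState.BOOKED: {TaskState.SUBMITTED},
    TaskState.SUBMITTED: {TaskState.VERIFIED, TaskState.BOOKED},  # back to BOOKED means rework
    TaskState.VERIFIED: {TaskState.PAID},
    TaskState.PAID: set(),
}


@dataclass
class Task:
    instructions: str
    worker: Optional[str] = None
    state: TaskState = TaskState.OPEN
    history: list = field(default_factory=list)  # earlier states, kept as an audit trail

    def advance(self, new_state):
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(f"cannot go from {self.state.value} to {new_state.value}")
        self.history.append(self.state)
        self.state = new_state


task = Task(instructions="Photograph the storefront sign at the agreed address.")
task.worker = "worker-123"          # hypothetical identifier
task.advance(TaskState.BOOKED)      # agent books a human
task.advance(TaskState.SUBMITTED)   # human submits proof of completion
task.advance(TaskState.VERIFIED)    # agent accepts the verification signal
task.advance(TaskState.PAID)        # payment is triggered only after verification
print(task.state.value)  # paid
```

Encoding the transitions explicitly means a payment cannot be triggered for a task that was never verified, and the history list leaves a record of how the task got there.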

Where AI needs humans

The promise of autonomous agents often implies end-to-end automation. In practice, that autonomy breaks down at the boundary between digital systems and the physical world. This is where the embodiment gap becomes visible. Platforms like Rent a Human.ai operate precisely in that gap: in the space where software intent requires physical execution. The types of tasks currently delegated reveal where AI systems encounter structural limits.

Deliveries and pickups: An AI agent may identify the need to retrieve a document, deliver an item, or collect physical materials. While logistics companies exist, they are not always optimized for one-off, small-scale, or highly specific errands. Human contractors provide flexible, location-specific execution.

Event attendance: Attending a conference, being present at a local gathering, or checking in at a venue requires embodiment. A digital agent can register for an event, but it cannot physically appear, observe context, or respond to on-site dynamics.

Real-world verification: Tasks such as taking geo-tagged photos, verifying signage, confirming store conditions, or checking physical documents highlight a recurring need: validation of physical state. These are often lightweight tasks, but they require eyes, mobility, and situational judgment.
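One way an agent might sanity-check a geo-tagged photo is to compare the coordinates in the photo's metadata with the task location. The Python sketch below uses the haversine formula for that comparison. The function names, the 100-metre default radius, and the sample coordinates are assumptions for illustration. Since location metadata can be falsified, this is a plausibility check, not proof.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two (lat, lon) points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def photo_within_radius(photo_coords, target_coords, radius_m=100.0):
    """True if the photo's geotag lies within `radius_m` of the task location."""
    return haversine_m(*photo_coords, *target_coords) <= radius_m


target = (52.5200, 13.4050)        # hypothetical task location
nearby_photo = (52.5203, 13.4052)  # a few dozen metres away
distant_photo = (52.5300, 13.4050) # roughly a kilometre away

print(photo_within_radius(nearby_photo, target))   # True
print(photo_within_radius(distant_photo, target))  # False
```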

Physical errands: From posting mail to interacting with local services, certain actions are not API-accessible. They exist outside standardized digital interfaces and require ad hoc human interaction.

Edge cases and experimental tasks: More experimental uses, such as staged scenarios, observational experiments, or creative instructions, demonstrate the flexibility of human embodiment. These tasks are often too irregular or context-dependent to justify formal automation.

Why robotics is not the immediate solution

A natural question arises: why not deploy robots instead?

Current robotics systems excel in controlled environments: warehouses, manufacturing lines, structured logistics hubs. Open-world tasks, however, introduce additional complexity: unstructured environments, dynamic human interaction, regulatory constraints, and edge-case variability.

General-purpose robotics capable of navigating arbitrary urban environments with high reliability remains costly and technically challenging. Even where feasible, deployment requires capital expenditure, maintenance, and regulatory approval. In contrast, human contractors offer immediate deployment, geographic flexibility, and contextual reasoning at low marginal cost.

Furthermore, human flexibility is difficult to encode explicitly in robotic systems. Humans can generalize across tasks without retraining, which makes them well-suited for irregular or low-frequency requests.

Risks and controversies

The idea of AI agents autonomously hiring humans is technically intriguing. But beneath the novelty lie structural questions that go beyond product design. When software systems can issue instructions, allocate work, and release payment without direct human oversight, governance becomes a central concern.

AI agents as bosses

In this model, AI agents act as delegators. They define tasks, set conditions, and determine completion criteria. Even if the platform itself does not position AI as an employer in the traditional sense, the operational dynamic resembles algorithmic management. This raises questions about authority and accountability. If an agent’s logic determines whether a task is valid, complete, or payable, human workers are subject to automated decision-making. Unlike traditional platforms where a human requester can be contacted, an autonomous agent may not be directly reachable or negotiable.

For a technical audience, this is less about symbolism and more about control systems. When task definition and verification are automated, governance is encoded in software. Errors in logic, ambiguous instructions, or flawed verification models can directly affect compensation and working conditions.

Power imbalance and market dynamics

Reports suggest that interest from human participants may exceed demand from active AI agents. This imbalance introduces classic marketplace dynamics: oversupply of labor and limited task volume. If autonomous agents remain relatively few while the pool of registered humans grows rapidly, bargaining power shifts toward the demand side. Even when workers define their own rates, competitive pressure can drive prices down. Unlike traditional freelance platforms, where reputation systems moderate supply-demand matching, agent-driven demand may optimize purely for cost and speed.

From a systems perspective, this becomes an incentive alignment problem. Agents may be optimized to minimize cost, while humans seek fair compensation. Without strong counterbalances, the platform’s logic could structurally favor downward pricing pressure.
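The difference between cost-only selection and a more balanced objective can be illustrated with a toy example in Python. The candidates, the `rating` field, and the weighting scheme are invented for illustration. Nothing here reflects how agents on the platform actually choose workers.

```python
# Hypothetical candidates; rates and ratings are made up.
candidates = [
    {"name": "A", "rate": 12.0, "rating": 3.1},
    {"name": "B", "rate": 15.0, "rating": 4.8},
    {"name": "C", "rate": 25.0, "rating": 4.9},
]


def pick_cheapest(cands):
    """Pure cost minimization: the lowest hourly rate wins."""
    return min(cands, key=lambda c: c["rate"])


def pick_weighted(cands, rating_weight=0.5):
    """Blend a normalized cost score with a normalized rating score."""
    max_rate = max(c["rate"] for c in cands)

    def score(c):
        cost_score = 1 - c["rate"] / max_rate  # 0 for the most expensive candidate
        quality_score = c["rating"] / 5.0      # assumes a 0 to 5 rating scale
        return (1 - rating_weight) * cost_score + rating_weight * quality_score

    return max(cands, key=score)


print(pick_cheapest(candidates)["name"])  # A
print(pick_weighted(candidates)["name"])  # B
```

With a rating weight of zero the weighted picker collapses back to the cheapest candidate, which is the behavior the downward pricing pressure argument assumes.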

Governance gaps

Autonomous agent platforms operate in a regulatory grey zone. Traditional gig platforms have defined user agreements and dispute frameworks. In an AI-mediated system, responsibility becomes less obvious. If AI agents generate tasks automatically, oversight mechanisms must detect and filter problematic instructions before they reach humans. Without robust safeguards, harmful or legally questionable tasks could propagate at scale.
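A pre-screening step of this kind could look roughly like the following Python sketch. It is deliberately simplistic: the category names, the `TaskRequest` fields, and the three outcomes are hypothetical, and a real safeguard would need far more than a category lookup.

```python
from dataclasses import dataclass

# Hypothetical policy lists; a real platform would define and maintain its own.
BLOCKED_CATEGORIES = {"surveillance", "impersonation"}
REVIEW_CATEGORIES = {"document_handling", "identity_verification"}


@dataclass
class TaskRequest:
    category: str
    instructions: str
    location: str


def screen(request):
    """Return 'reject', 'review', or 'allow' for an agent-generated task."""
    # Incomplete tasks never reach a human worker.
    if not request.instructions.strip() or not request.location.strip():
        return "reject"
    if request.category in BLOCKED_CATEGORIES:
        return "reject"
    # Sensitive categories are routed to a human reviewer before publication.
    if request.category in REVIEW_CATEGORIES:
        return "review"
    return "allow"


print(screen(TaskRequest("delivery", "Pick up a parcel at the front desk.", "Berlin")))  # allow
print(screen(TaskRequest("identity_verification", "Check ID at handover.", "Berlin")))   # review
print(screen(TaskRequest("surveillance", "Follow a person.", "Berlin")))                 # reject
```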

Liability and accountability

If a task leads to harm, who bears responsibility? The developer of the AI agent, the platform facilitating delegation, or the human who executed the task?

Traditional legal frameworks assume identifiable human decision-makers. Autonomous agents complicate this assumption. When task creation, selection, and validation are automated, responsibility is distributed across software logic and platform infrastructure. This creates edge-case scenarios where accountability may be contested or unclear.

Conclusion

Rent a Human.ai illustrates a structural development in AI systems: autonomous agents are beginning to coordinate not just software, but human labor. Rather than replacing humans, this model positions them as flexible execution layers where digital autonomy reaches its physical limits. This challenges the conventional automation narrative. The future of work may not be defined solely by substitution or augmentation, but by delegation—where AI agents plan and orchestrate, and humans execute embodied tasks that software cannot. Whether this remains a niche experiment or evolves into durable infrastructure depends on governance, economics, and enterprise integration. But one shift is already visible: the boundary between software systems and human labor is becoming programmable.